AI × DeFi Without Losing Control

Takeaways from the Davos WEF AI Breakfast

In January 2026, I had the pleasure of participating in an AI × blockchain panel organized by CV Labs in Davos during the World Economic Forum. As expected, the discussion quickly converged on the disruptive potential of AI and automation.

That part is no longer controversial.

What was more interesting, and frankly more useful, were the questions that kept resurfacing beneath the hype: questions about product reality, risk, and whether today’s AI systems are actually ready to operate in financial markets. This is where the panelists’ expert insights proved invaluable.

Below are the reflections that stuck with me after the discussion.

1. Separating Hype from Real Tech

One recurring theme was how difficult it has become to distinguish between vision and execution. AI has become the default label for almost everything, from LLM-powered apps to autonomous agents and automated code generators.

From an investor or institutional perspective, the fast pace of technological progress makes due diligence harder than ever. A few signals repeatedly came up as essential from the panelists:

Clear business logic: who pays, when, and why - today, not “eventually.”

Operational clarity: is AI actually running in production or just in demos?

Honesty about on-chain execution: what truly needs blockchain execution, and what does not.

None of these alone separates hype from substance, but without them it is nearly impossible to tell the two apart.

2. AI, LLMs, Agents — Not The Same Thing

Another issue is that very different technologies are grouped under the same “AI” umbrella, especially LLMs and AI agents. They are not interchangeable, so let’s break these terms down:

Large Language Models (LLMs) excel at language, abstraction, and reasoning in text space.

LLMs are probabilistic models trained to predict the next word in a sequence of text. In practice, this training objective makes them highly effective at interpreting unstructured information, summarizing it, generating explanations, and acting as an interface between humans and complex systems. However, LLMs do not reason about economic incentives, long-term outcomes, or hard constraints unless these are explicitly imposed. As a result, while they can describe financial systems convincingly, they should not be trusted with direct control over capital without additional safeguards.

AI agents act autonomously, observing environments and executing actions.

AI agents are systems designed to observe an environment, select actions, and execute those actions autonomously in a continuous loop. Agents are defined by their ability to act, not by the specific model they use internally.
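The observe, select, execute loop can be sketched in a few lines of Python. This is only an illustration: the `Observation` type, the `run_agent` helper, and the toy price rule are hypothetical names and values made up for this example, not part of any real agent framework.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Observation:
    price: float  # hypothetical market price seen by the agent


def run_agent(
    observe: Callable[[], Observation],
    decide: Callable[[Observation], str],
    execute: Callable[[str], None],
    steps: int,
) -> List[str]:
    """Run the observe -> decide -> execute loop and return the actions taken."""
    actions = []
    for _ in range(steps):
        obs = observe()          # 1. observe the environment
        action = decide(obs)     # 2. select an action (any model can sit here)
        execute(action)          # 3. act without waiting for a human
        actions.append(action)
    return actions


# Toy usage: a fixed price feed and a trivial rule standing in for the model.
prices = iter([100.0, 95.0, 105.0])
log: List[str] = []
actions = run_agent(
    observe=lambda: Observation(next(prices)),
    decide=lambda obs: "buy" if obs.price < 100 else "hold",
    execute=log.append,
    steps=3,
)
print(actions)  # ['hold', 'buy', 'hold']
```

Note that `decide` is just a function: it could wrap an LLM, a predictive model, or a hand-written rule. What makes this an agent is the loop that executes the result.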

LLMs are often embedded inside agents to support planning or reasoning, but an agent’s defining feature is autonomous execution.

Deep learning underpins prediction and pattern recognition.

Deep learning refers to a broad class of neural network models capable of learning complex, non-linear relationships from data. These models underpin both LLMs and many predictive systems used in finance.

Deep learning is particularly effective for tasks such as price forecasting, volatility estimation, risk scoring, and anomaly detection. While these models provide valuable signals, they are fundamentally predictive rather than decision-making systems. They inform decisions, but they do not define policies or objectives on their own.

Reinforcement learning (RL) focuses on sequential decision-making under uncertainty.

An RL system learns a policy by interacting with an environment, taking actions, observing outcomes, and optimizing a long-term objective through feedback. This makes reinforcement learning especially relevant for financial systems, where actions have delayed effects and short-term optimization can lead to long-term fragility.
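To make the point about delayed effects concrete, here is a minimal tabular Q-learning sketch on a made-up two-state market. The environment, its rewards, and the hyperparameters are all assumptions chosen for illustration; this is not the setup used in any specific research.

```python
import random

# Hypothetical toy market: state 0 = calm, state 1 = stressed.
# Action 0 = conservative, action 1 = aggressive. All numbers are invented.
def step(state, action):
    """Return (reward, next_state) for the toy environment."""
    if state == 0:
        # Aggressive pays more now but pushes the market into stress.
        return (1.0, 0) if action == 0 else (2.0, 1)
    # Stressed: losses either way; only the conservative action recovers.
    return (-3.0, 0) if action == 0 else (-3.0, 1)


def q_learning(gamma, steps=20_000, alpha=0.1, seed=0):
    """Learn Q-values from interaction, exploring with uniform random actions."""
    rng = random.Random(seed)
    q = [[0.0, 0.0], [0.0, 0.0]]  # q[state][action]
    state = 0
    for _ in range(steps):
        action = rng.randrange(2)
        reward, nxt = step(state, action)
        target = reward + gamma * max(q[nxt])  # bootstrapped long-term value
        q[state][action] += alpha * (target - q[state][action])
        state = nxt
    return q


def greedy_action(q, state):
    return max(range(2), key=lambda a: q[state][a])


far_sighted = q_learning(gamma=0.9)  # values long-term outcomes
myopic = q_learning(gamma=0.0)       # values only the immediate reward

print(greedy_action(far_sighted, 0))  # 0: stays conservative in calm markets
print(greedy_action(myopic, 0))       # 1: chases the short-term payoff
```

The only difference between the two learners is the discount factor `gamma`. With `gamma=0.0` the policy optimizes the next reward and walks straight into the stressed state, which is the short-term optimization problem described above.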

These distinctions matter because financial systems do not fail due to a lack of automation, but because automation is applied without sufficient understanding of decision quality and systemic effects.

LLMs without constraints can generate plausible but unsafe recommendations, AI agents without control can amplify volatility, and predictive models without policy design can create false confidence.

Robust financial infrastructure requires not just faster execution, but decision-making systems that are constrained, adaptive, and aligned with long-term stability.

3. AI × Blockchain: where does it really make sense?

A surprisingly pragmatic consensus emerged around one question: does AI need to run entirely on the blockchain? In most cases, it does not. However, integrating AI with blockchains is particularly valuable because of:

Permissionless access to blockchains and their state (data)

Composability of blockchain smart contracts

Transparent rules of settlement and execution

This is precisely why AI agents are gravitating toward DeFi rather than traditional finance. DeFi removes gatekeepers and APIs that normally constrain automation. Yet, not all AI logic must be stored as a blockchain smart contract.

4. AI × DeFi, and why institutions do not put €1m into it (yet)

The panel covered a broad range of applications of AI, from early-stage experiments to systems already live. Examples included:

Semantic-based investment strategies, such as AI agents analyzing social media or news signals and translating them into portfolio decisions (one example discussed came from 21Shares).

Automated liquidity management in DeFi, where agents dynamically rebalance positions in AMMs like Uniswap.

Algorithmic risk and rate management in lending markets, adjusting parameters faster than human governance ever could.

One moment during the discussion stood out to me. Despite all the enthusiasm, none of the panelists said they would confidently allocate €1 million to an AI-only investment strategy today.

Why? My personal, subjective take is that we are missing a control layer. AI is excellent at automation. LLMs are powerful at reasoning in constrained contexts. Agents can execute predefined strategies at scale. However:

They still struggle with robust reasoning under uncertainty.

They often end up following sentiment rather than understanding fundamentals.

In volatile systems like DeFi lending or liquidity provision, small mistakes compound fast.

True progress will come when we add a layer of intelligence, constraints, and risk-aware control on top of automation, not just faster execution.

This reminded me strongly of earlier DeFi discussions around bad liquidity management and fragile lending markets. Automation alone is not enough; adaptive, well-governed automation is what matters.

5. Example of Liquidation Death Spiral

One of the most instructive examples is the liquidation death spiral in DeFi lending markets. We recently discussed it at the IC3 Winter Retreat with representatives of Fidelity.

The mechanism is simple but dangerous:

A price shock triggers liquidations.

Liquidations push prices further down.

Falling prices trigger more liquidations.

Liquidity disappears exactly when it is needed most.
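The feedback loop above can be reproduced with a deliberately crude simulation. Everything here is an assumption made for illustration: the `simulate_cascade` helper, the linear price-impact rule, the 80% liquidation threshold, and the four sample positions are invented and do not model any specific protocol.

```python
def simulate_cascade(price, positions, shock, impact_per_unit, liq_threshold=0.8):
    """Simulate liquidation rounds after an initial price shock.

    positions: list of (collateral_units, debt) tuples.
    A position is liquidated when debt > collateral * price * liq_threshold.
    Each unit of collateral sold moves the price down by impact_per_unit.
    Returns (final_price, rounds, liquidated_count).
    """
    price *= 1.0 - shock  # step 1: the initial price shock
    open_positions = list(positions)
    rounds = liquidated = 0
    while True:
        limit = price * liq_threshold
        unsafe = [(c, d) for c, d in open_positions if d > c * limit]
        if not unsafe:
            return price, rounds, liquidated
        open_positions = [(c, d) for c, d in open_positions if d <= c * limit]
        sold = sum(c for c, _ in unsafe)
        # steps 2-3: forced selling pushes the price down, exposing more positions
        price *= max(0.0, 1.0 - impact_per_unit * sold)
        rounds += 1
        liquidated += len(unsafe)


positions = [(10, 700), (10, 650), (10, 600), (10, 400)]

# Thin liquidity: each sale moves the price, so one liquidation triggers the next.
with_impact = simulate_cascade(100.0, positions, shock=0.15, impact_per_unit=0.007)
# Deep liquidity: the same shock liquidates a single position and stops.
no_impact = simulate_cascade(100.0, positions, shock=0.15, impact_per_unit=0.0)

print(with_impact)  # 3 rounds, 3 positions liquidated
print(no_impact)    # 1 round, 1 position liquidated
```

The same 15% shock liquidates one position when selling has no price impact and three when liquidity is thin, even though no rule of the protocol has changed.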

Nothing is “broken” at the smart-contract level. The failure emerges from static risk parameters interacting with dynamic markets. Most DeFi lending protocols still rely on fixed or slowly updated interest-rate curves and on manual DAO governance for parameter changes. These designs work in calm markets. Under stress, they amplify feedback loops.
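For readers unfamiliar with such curves, a static rate curve is often a piecewise-linear function of pool utilization with a steeper slope above a "kink". The sketch below assumes that general shape; the function name `borrow_rate` and every parameter value are hypothetical and not taken from any particular protocol.

```python
def borrow_rate(utilization, base=0.02, slope_low=0.10, slope_high=1.00, kink=0.80):
    """Annual borrow rate as a fixed, piecewise-linear function of utilization.

    utilization: borrowed / supplied, in [0, 1].
    Below the kink the rate rises gently; above it, steeply.
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    if utilization <= kink:
        return base + slope_low * utilization / kink
    excess = (utilization - kink) / (1.0 - kink)
    return base + slope_low + slope_high * excess


for u in (0.0, 0.8, 1.0):
    print(f"utilization {u:.0%}: borrow rate {borrow_rate(u):.2%}")
```

The parameters are constants: the curve responds to utilization, but its shape stays the same in calm and stressed markets until governance votes to change it.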

Automation alone does not solve this. In fact, blind automation makes things worse by accelerating the very dynamics that cause instability.

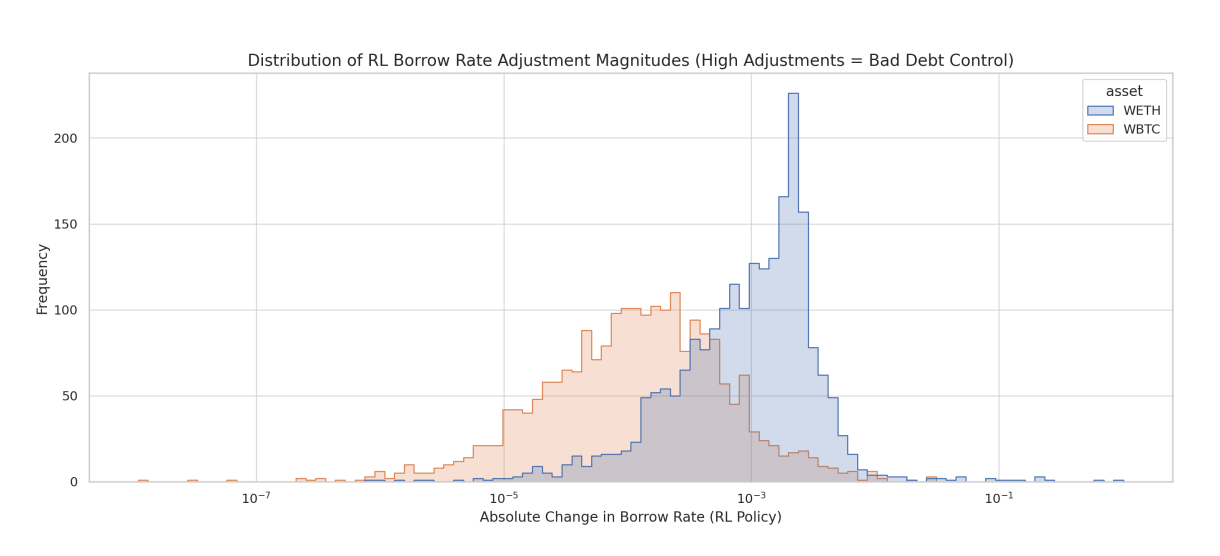

This exact problem motivated my earlier research on AI-driven interest-rate adjustment in DeFi lending protocols. Using reinforcement learning, we explored how adaptive controllers could:

Adjust rates dynamically in response to market conditions

Stabilize utilization during stress

Reduce bad debt accumulation

Slow down liquidation cascades

Unlike static curves, these models learn from historical stress events and adapt continuously.

The key insight was not “AI replaces governance,” but:

AI can act as a real-time risk co-pilot — if it is properly constrained.

6. The Missing Layer: Control

This brings us back to Davos — and to what I believe is still missing in AI × DeFi. AI agents today are strong at execution, automation and following signals.

They are still weak at robust reasoning under uncertainty, understanding second-order market effects, and operating within institutional risk constraints by default.

The real opportunity is therefore a control layer:

An AI co-pilot proposing adjustments

A control plane enforcing hard risk limits

Human-in-the-loop oversight

Full auditability and explainability
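One way to picture how these four pieces fit together is a small control plane that sits between the AI's proposal and the protocol parameter. This is a sketch under stated assumptions: `RiskLimits`, `ControlPlane`, the limit values, and the `approve` callback are hypothetical names invented here, and a real system would need far more than this.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass(frozen=True)
class RiskLimits:
    min_rate: float = 0.0
    max_rate: float = 0.50
    max_step: float = 0.02     # largest change allowed per update
    review_step: float = 0.01  # changes above this need human approval


@dataclass
class ControlPlane:
    limits: RiskLimits
    approve: Callable[[float, float], bool]  # human-in-the-loop hook
    audit_log: List[dict] = field(default_factory=list)

    def apply(self, current: float, proposed: float, reason: str) -> float:
        """Clamp an AI proposal to hard limits, ask for review, and log it."""
        lim = self.limits
        step = max(-lim.max_step, min(lim.max_step, proposed - current))
        bounded = max(lim.min_rate, min(lim.max_rate, current + step))
        needs_review = abs(bounded - current) > lim.review_step
        approved = self.approve(current, bounded) if needs_review else True
        final = bounded if approved else current
        self.audit_log.append({
            "current": current, "proposed": proposed, "applied": final,
            "needs_review": needs_review, "approved": approved, "reason": reason,
        })
        return final


# Toy usage: the reviewer rejects everything that reaches them.
plane = ControlPlane(RiskLimits(), approve=lambda old, new: False)
small = plane.apply(0.05, 0.055, "utilization drifting up")  # auto-applied
large = plane.apply(0.05, 0.30, "utilization spike")         # clamped, then rejected
print(small, large, len(plane.audit_log))
```

The AI only ever proposes. Hard limits are enforced in plain code that does not depend on the model, larger moves wait for a human, and every decision leaves a record.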

This layer turns AI from a volatility amplifier into a stabilizing force. This area becomes important now because several conditions finally align:

DeFi failure modes are well understood

AI inference is cheap and fast enough to run continuously

Institutions are experimenting on-chain, but only within strict constraints

Market volatility remains the ultimate stress test

2026 is arguably the first moment when AI-assisted, risk-aware DeFi infrastructure is both necessary and feasible.

Closing Thoughts

The excitement around AI × blockchain is justified. Blockchains create a uniquely powerful environment for AI to operate, precisely because of their permissionless nature and open data access. DeFi protocols, as on-chain financial services, are set to become the most natural environments for AI agents to act, experiment, and scale.

At the same time, AI agents operating in DeFi need more than LLMs and raw automation. They require clear constraints, robust governance, and accountability by design. Without these, speed and autonomy amplify instability rather than improve adaptability.

This missing control layer — combining AI-assisted decision-making with hard risk limits and human oversight — is where the next wave of real progress will happen. If this resonates, whether from a tech, research, or institutional deployment perspective, I’m always happy to continue the conversation.